Selenium is actually a testing interface, but it has grown in use among people who need to do web scraping. The good news is that it's fairly simple to pick up the basics! To get started, we import `webdriver` from the selenium package. This is how Selenium interacts with a browser: we invoke the webdriver and specify which browser we want to use.
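As a rough sketch of that step, the snippet below opens a page in a real browser and returns the HTML after the browser has rendered it. The function name `fetch_rendered_html` and the choice of Chrome as the default are my own assumptions for illustration; the post only says that we pick a browser. It also assumes Selenium and a matching browser are installed.

```python
def fetch_rendered_html(url, browser="chrome"):
    """Open url in a real browser via Selenium and return the rendered HTML.

    Hypothetical helper: the name and the default browser are assumptions.
    """
    # Imported inside the function so it can be defined even if Selenium
    # is not installed yet; the import only runs when the function is called.
    from selenium import webdriver

    # Specify which browser to drive. Both classes exist in Selenium.
    drivers = {"chrome": webdriver.Chrome, "firefox": webdriver.Firefox}
    driver = drivers[browser]()  # invoking the webdriver launches the browser
    try:
        driver.get(url)            # load the page and let its scripts run
        return driver.page_source  # HTML as the browser currently sees it
    finally:
        driver.quit()              # always close the browser window
```

Calling `fetch_rendered_html(url)` with the Reddit search URL would then give back the page as a browser sees it, rather than the raw JavaScript that a plain HTTP request returns.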

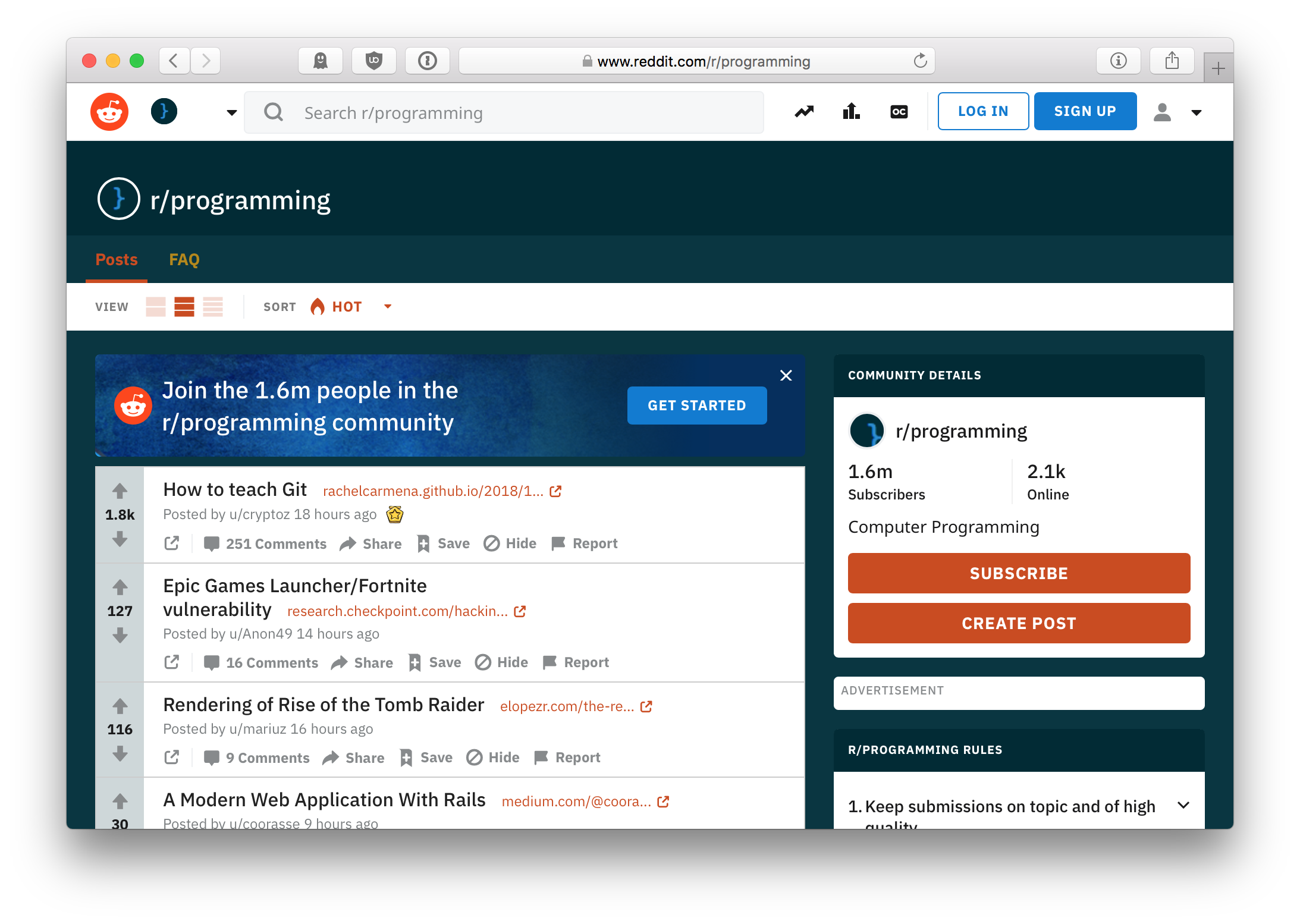

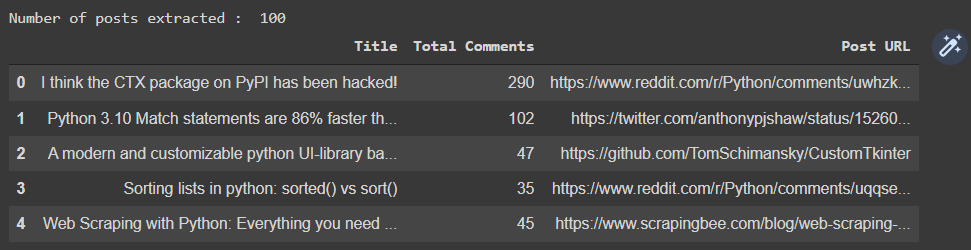

You may ask why we keep the tickers in a separate text file. Having a list of 1400 stock tickers in the program would certainly make the code much bulkier!

Getting data from Reddit

The subreddit we want is r/wallstreetbets ("Like 4chan found a Bloomberg Terminal"). If we search for Daily Discussion using the Reddit search function, we come across the search results for flair:"Daily Discussion". When doing any web scraping project it is important to get used to the website you want to get data from. The thread we are interested in is always the 2nd item on the list. Specifying 'Daily Discussion Thread' unfortunately gives us the result shown in the image.

The next challenge for scraping Reddit is that it has a lot of interactive elements on the page, which invariably means JavaScript is being used. The simplest way to get information from a website is a package called Requests, which allows us to make HTTP requests to Reddit's servers and get back the HTML we want. Let's take a look at what happens when we run `import requests`, set `url = ' '` and call `requests.get(url)`.

Awesome! We have the response we want. For those not used to HTTP status codes, the code 200 means the request succeeded and the server sent back a response. You can look up the HTTP status codes for further details.

But let's look deeper at this: we can use the `text` attribute of the response to look at what we got back.

Output: `'\n var _SUPPORTS_TIMING_API = typeof performance = \'object\' & !!performance.mark & !! asure & !!performance.getEntriesByType \n function _perfMark(name) \n`

Now the response we get back is a lot of JavaScript. Scrolling down this response, we can see there is no information that is useful to us! So what is the next step to getting the data we want? Scraping a JavaScript-heavy page requires us to automate how the browser interacts with the website. To do this within Python we use the package Selenium.
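The Requests check described above can be sketched roughly as follows. The helper names `fetch_page` and `summarize` are hypothetical, and the URL is left as a parameter because the post does not show it. The idea is simply to look at the status code and a short preview of the body before deciding whether the response is useful.

```python
def fetch_page(url):
    """Make a GET request and return (status_code, body_text)."""
    # Third-party package; imported here so the helpers below can be used
    # (and tested) without a network connection.
    import requests

    response = requests.get(url, timeout=10)
    return response.status_code, response.text


def summarize(status_code, text, preview=200):
    """Summarize a response: did it succeed, how long is it, how does it start."""
    return {
        "ok": status_code == 200,   # 200 means the request succeeded
        "length": len(text),        # size of the body in characters
        "preview": text[:preview],  # first few characters to eyeball
    }
```

A typical use would be `summarize(*fetch_page(url))`. If the preview is mostly script code rather than thread titles, that is a sign the content is rendered by JavaScript and a plain request will not be enough.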
So with that, let's tackle these one at a time. The US market has over 1476 companies on some form of stock exchange. I created a simple program to scrape these tickers from an online program (Sharepad), but you can grab the resulting text file so most of the work is done for you. When dealing with a large set of data, it can sometimes be useful to output it into a text file and then read that file back in to generate the list.
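A minimal sketch of the text-file approach is shown below. It assumes one ticker per line, and the function names `save_tickers` and `load_tickers` are my own; the post does not describe the actual format of its ticker file.

```python
from pathlib import Path


def save_tickers(tickers, path):
    """Write the tickers to a text file, one per line (assumed format)."""
    Path(path).write_text("\n".join(tickers) + "\n", encoding="utf-8")


def load_tickers(path):
    """Read the file back into a list, skipping blank lines."""
    lines = Path(path).read_text(encoding="utf-8").splitlines()
    # Strip stray whitespace and normalize to upper case.
    return [line.strip().upper() for line in lines if line.strip()]
```

With this in place the main program only needs something like `tickers = load_tickers("tickers.txt")` (the file name is hypothetical) instead of carrying the whole list in the source code.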
